Boosting Data Mesh

Accelerate your Data Mesh Platform Implementation by 10x

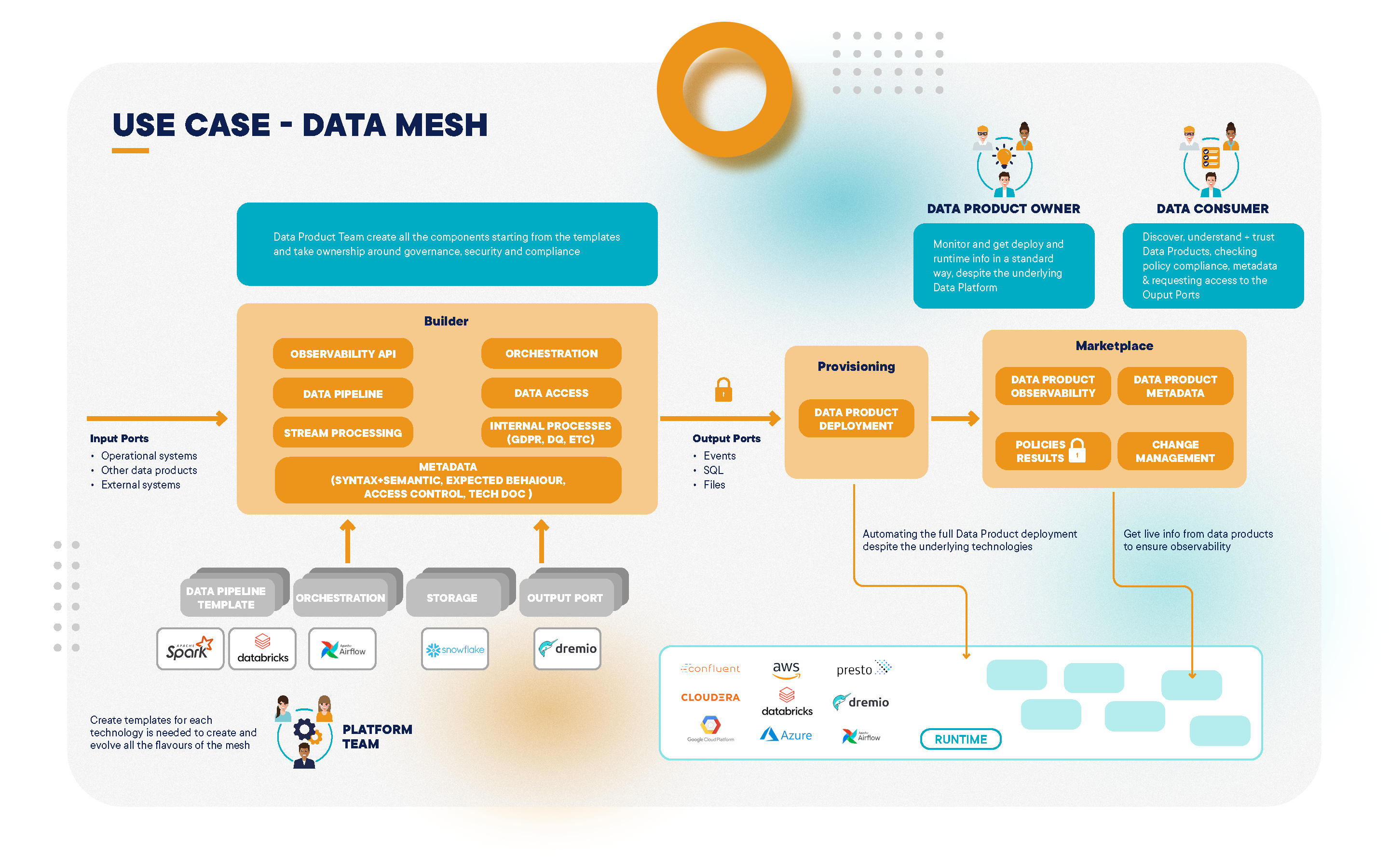

Witboost enhances Data Mesh adoption by striking the right balance between decentralization and governance.

Become a true enabler for Business Domains with a fully self-service, governed experience for building interoperable Data Products.

Self-Service Data Platform

Data Infrastructure Plane

The underlying infrastructure of Data Products is automated and connects with your existing data and tech stacks, while preserving architectural and security policies.

Data Product Experience Plane

Each domain autonomously manages Data Product lifecycles end-to-end, using templates and versioning, while ensuring compliance through governance guardrails.

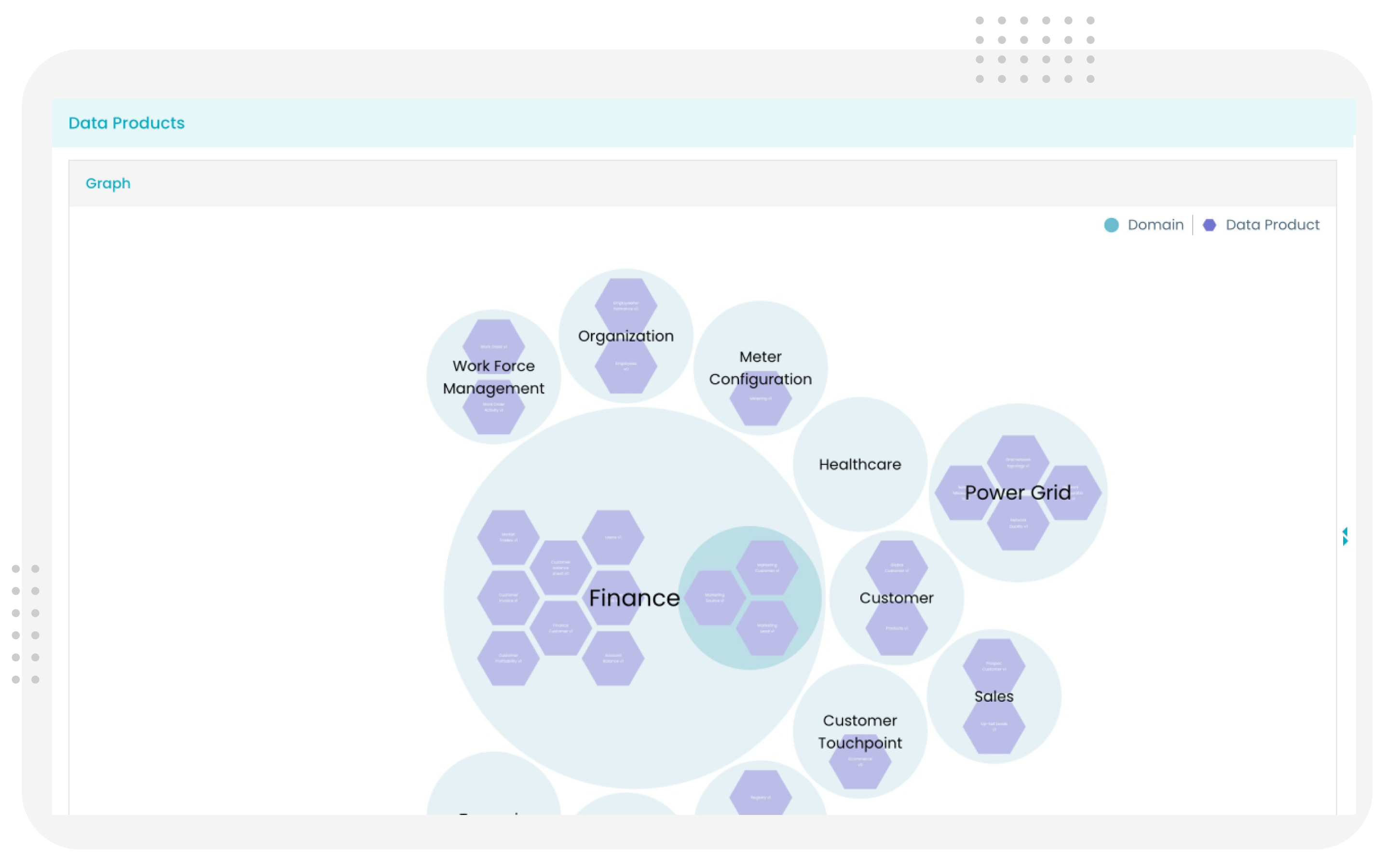

Mesh Supervision Plane

A visual graph of Data Products in Witboost offers a top-down view of the mesh and the relationships within it, integrating data access, observability, and business-driven data discovery.

Streamlined Data Mesh

Federated Computational Governance

Witboost lets you integrate computational policies into the lifecycle of Data Products. Global and local policies make it possible to enforce governance both at deploy time and at runtime.

Domain-Oriented Ownership

Data Products can be created with full autonomy and clear ownership, leveraging templates and self-service capabilities provided by the Platform Team, enabling rapid enterprise-wide adoption.

Data as a Product

Blueprints and templates standardize Data Products and all their components, offering a clear and simple way to create and manage each one as a single atomic unit of deployment.

Find Out How These Companies Adopted Data Mesh

Scania's Evolution toward Data Mesh

How Poste Italiane Leads Its Journey to Data Mesh

How Enel Group Built a Data Mesh Architecture

Data Mesh Success Cases

Learn more about how these data-driven companies have used Witboost to steer their Data Mesh journey.

Witboost: Data Mesh Enabler

Domain-Oriented Ownership

A unified workspace for each domain with full autonomy in delivering value, while leveraging the platform's capabilities end-to-end.

Self-Service Data Infrastructure as a Platform

Automate your Mesh in depth with Templates. Each one provides full provisioning automation across the entire Data Product lifecycle.

Federated Computational Governance

Enforce governance with computational policies throughout the delivery process, all managed by the platform team.

Data as a Product

Standardize Data as a Product thinking with Templates and boost interoperability across data silos, ultimately breaking them down.

Witboost is the ideal platform upon which to build a Data Mesh solution for companies of any size. When Vishnu Chintamaneni, Director of Engineering, and Anjali Gugle, Product Security and Data Strategy Manager at Cisco (one of the largest technology companies in the world, ranking 74 on the Fortune 100 list), envisioned “Stellaris”, a new data and API platform based on the Data Mesh architecture, the choice was obvious: build it on the Witboost platform.

The project aims to solve the scalability problems of Cisco's current architecture, which stem from highly coupled pipeline decomposition, hyper-specialized ownership, and loss of context. Together, these lead to ineffective data management and weak governance, stifling productivity and innovation. The solution is a Data Mesh architecture built on Witboost.

This will allow Cisco to become more data-driven: to democratize data by making it securely available for proper use, increase data literacy and data quality, enable visibility, clarify ownership, and provide transparency. In other words, the goal is to solve the issues presented by centralized, monolithic data lakes by treating domain-based data as the end product. This will empower separate business domains to host and serve their datasets in an easily consumable way, and enable analytics that truly reap the benefits of their data.