Meteorological phenomena need data to be collected at a global level to capture the physical laws that govern nature.

Weather is a global phenomenon, so preparing for meteorological events clearly requires global access to the related data.

More broadly, scientific research must share background, knowledge, and data with the whole scientific community to be reproducible, whether it concerns a natural phenomenon or a machine learning technique.

The Open Data movement takes inspiration from the spirit of scientific research [1] to bring into any community the advantage of sharing content to improve the exploration and usage of data at a global level.

Open Data can be summarized in three main principles [2]:

• Availability: access to the data has to be as free as possible, and the data must be provided in a format that is suitable for further usage;

• Distribution: the data must be licensed under terms that allow for usage and distribution;

• Universality: the data can be accessed by anyone for any kind of (licit) usage.
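
As a rough illustration, the three principles can be turned into checks on a data set's metadata. The sketch below is only an assumption about how such a check might look: the `DatasetMetadata` record, the list of open licenses (a few SPDX identifiers), the list of reusable formats, and the use of "no registration required" as a stand-in for universality are all hypothetical choices, not part of any official definition.

```python
from dataclasses import dataclass
from typing import Dict

# Illustrative assumptions, not an official list: a few SPDX identifiers of
# open licenses and a few machine-readable file formats.
OPEN_LICENSES = {"CC0-1.0", "CC-BY-4.0", "ODbL-1.0", "PDDL-1.0"}
REUSABLE_FORMATS = {"csv", "json", "xml", "geojson"}


@dataclass
class DatasetMetadata:
    """Hypothetical metadata record describing a published data set."""
    title: str
    file_format: str
    license_id: str
    requires_registration: bool


def check_open_data(meta: DatasetMetadata) -> Dict[str, bool]:
    """Return one pass/fail flag per Open Data principle."""
    return {
        # Availability: the format must allow further usage.
        "availability": meta.file_format.lower() in REUSABLE_FORMATS,
        # Distribution: the license must allow usage and redistribution.
        "distribution": meta.license_id in OPEN_LICENSES,
        # Universality: crude proxy, anyone can access without signing up.
        "universality": not meta.requires_registration,
    }


weather = DatasetMetadata("Hourly rainfall", "CSV", "CC-BY-4.0", False)
scanned = DatasetMetadata("Scanned reports", "pdf", "proprietary", True)

print(check_open_data(weather))
print(check_open_data(scanned))
```

A real assessment would of course look at far more than three fields, but even a simple check like this makes it easier to spot data sets that are published without being truly open.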

Open Data has been broadly implemented and it is an active movement that is affecting the Digital Transformation at many levels.

How do Open and Big Data work together?

Nowadays, many governments are driving public services to provide Open Data. This enables public institutions and private companies to use that data to provide analytics, forecasts, and services to the public.

Nonetheless, Open Data is still the exception. As opendatabarometer.org shows, few countries have a good implementation of Open Data practices.

However, the trend is promising, and the near future is going to be fruitful for applications living on data.

The Big Data problem is traditionally described through the Volume, Velocity, and Variety of the data to be handled [3]. As these three dimensions grow, data must be managed through specific techniques and technologies designed to work at that scale.

This is the point where Open Data and Big Data meet. In recent years, many data sets have been shared with the public by private and government parties. Examples include https://www.data.gov/ (USA), https://www.dati.gov.it/ (ITA), https://msropendata.com/, and https://ai.google/tools/datasets/. This signals that there is room for research and commercial activities to exploit the power of data, and Big Data gives us the means to get the best from it.

The connection between Open and Big Data has been explored for years, and you can find many examples on the Internet showing how to leverage Big Data practices to provide new services starting from Open Data.

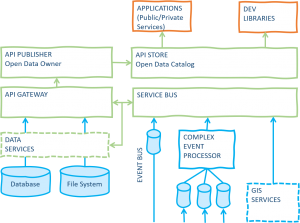

How does Open Data work? Here a diagram to depict a common set of boxes for Open Data architectures:

Many data sources can be ingested, either as real-time data streams or as slow-changing data. These data flows can be of very different kinds, to mention just a few:

• Sensors

• Historical data sets

• Categorical data sets

• File exchange

• GIS

Depending on the nature of the data streams, it may be useful to transform the received events through a dedicated system, such as a CEP (complex event processing) system [4] or a distributed event-processing framework, as the case requires.
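
To give a feeling for what this kind of event processing does, here is a minimal sketch of a single CEP-style rule written in plain Python: it watches a stream of sensor readings and emits a derived event when the mean over a sliding time window exceeds a threshold. The `Reading` and `Alert` types, the sensor name, and the threshold values are hypothetical, and the sketch assumes events arrive in timestamp order; a real CEP engine or streaming framework would add rule languages, out-of-order handling, and distribution on top of this idea.

```python
from collections import deque
from dataclasses import dataclass
from typing import Deque, Dict, Iterable, Iterator


@dataclass(frozen=True)
class Reading:
    """Hypothetical sensor event: timestamp in seconds plus a measured value."""
    sensor_id: str
    timestamp: float
    value: float


@dataclass(frozen=True)
class Alert:
    """Derived event emitted when the rule matches."""
    sensor_id: str
    timestamp: float
    mean_value: float


def detect_high_mean(events: Iterable[Reading], window_s: float,
                     threshold: float) -> Iterator[Alert]:
    """Yield an Alert whenever a sensor's mean over the last window_s seconds exceeds threshold.

    Assumes events arrive in timestamp order for each sensor.
    """
    if window_s <= 0:
        raise ValueError("window_s must be positive")
    windows: Dict[str, Deque[Reading]] = {}
    for ev in events:
        window = windows.setdefault(ev.sensor_id, deque())
        window.append(ev)
        # Drop readings that have fallen out of the time window.
        while window[0].timestamp <= ev.timestamp - window_s:
            window.popleft()
        mean = sum(r.value for r in window) / len(window)
        if mean > threshold:
            yield Alert(ev.sensor_id, ev.timestamp, mean)


stream = [
    Reading("pm10-a", 0, 30.0),
    Reading("pm10-a", 60, 40.0),
    Reading("pm10-a", 120, 70.0),
    Reading("pm10-a", 180, 80.0),
    Reading("pm10-a", 400, 20.0),
]

alerts = list(detect_high_mean(stream, window_s=180, threshold=50.0))
for alert in alerts:
    print(alert)
```

With this sample stream, only the reading at second 180 triggers an alert: at that point the window holds the values 40, 70, and 80, whose mean is above 50.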

Data can be collected from several sources:

• Hospitals (births/deaths, diagnoses, etc.)

• Industries (pollution precursors, etc.)

• Agriculture / Fishing (pollution precursors, quality of goods, etc.)

• Business (shops, parking meters, traffic, etc.)

• Tourism (shops, produced garbage, etc.)

Once data has been collected (gathering it is itself a Big Data problem), it needs to be transformed and delivered in a new shape. At some point, low-level message transformation is handled by message bus systems. The API Gateway integrates data coming from different sources and reshapes it into a standardized format that is ready to be exposed through APIs. This way we can decouple data integration from the serving layer. Message buses and API gateways sometimes share the same core functionality but operate at different scopes. The API publisher and store components complete the governance of the API lifecycle, and therefore of Open Data usability:

• Publishing: the data owner can provide controlled access to data and guarantee its availability and integrity.

• Consumption: the data consumer accesses published data through standard protocols (SOAP/REST) over secure channels, with authentication and authorization layers.

The API store represents the catalog for the provided open data.
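
The decoupling between data integration and the serving layer can be sketched in a few lines. The example below is a simplified assumption of how it could work, not a description of any specific gateway product: each source gets a small adapter that maps its raw payload to a common `Observation` record, and an in-memory dictionary plays the role of the API store catalog. All field names, source names, and data set names are hypothetical.

```python
from dataclasses import asdict, dataclass
from typing import Callable, Dict, Iterable, List


@dataclass(frozen=True)
class Observation:
    """Hypothetical standardized record exposed by the serving layer."""
    source: str
    measured_at: str  # ISO 8601 timestamp
    quantity: str
    value: float
    unit: str


def from_sensor(raw: dict) -> Observation:
    """Adapter for a hypothetical sensor payload."""
    return Observation("sensor", raw["ts"], raw["kind"], float(raw["val"]), raw["unit"])


def from_hospital(row: dict) -> Observation:
    """Adapter for a hypothetical hospital file with daily birth counts."""
    return Observation("hospital", row["date"] + "T00:00:00Z", "births",
                       float(row["births"]), "count")


# One adapter per source: integration logic stays out of the serving layer.
ADAPTERS: Dict[str, Callable[[dict], Observation]] = {
    "sensor": from_sensor,
    "hospital": from_hospital,
}

# In-memory stand-in for the API store catalog: data set name -> records.
CATALOG: Dict[str, List[dict]] = {}


def normalize(source: str, payload: dict) -> dict:
    """Reshape a source-specific payload into the standardized format."""
    return asdict(ADAPTERS[source](payload))


def publish(dataset: str, source: str, payloads: Iterable[dict]) -> None:
    """Normalize payloads and add them to a data set in the catalog."""
    CATALOG.setdefault(dataset, []).extend(normalize(source, p) for p in payloads)


def list_datasets() -> List[str]:
    """Return the names of the published data sets."""
    return sorted(CATALOG)


publish("air-quality", "sensor",
        [{"ts": "2024-01-01T10:00:00Z", "kind": "pm10", "val": "41.5", "unit": "ug/m3"}])
publish("births", "hospital", [{"date": "2024-01-01", "births": "12"}])

print(list_datasets())
print(CATALOG["births"])
```

Adding a new source then means writing one more adapter, while consumers keep reading the same standardized records from the catalog.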

How to use Open Data?

Too many applications can be built on Open Data initiatives to list them all, so I like to focus on services of public interest. Here are some examples:

• Epidemiological forecasting

• Pollution forecasting

• Smart parking systems

Next time, we can go deeper into any of the scenarios we have mentioned in this article. Meanwhile, we will leave the reader with a couple of questions: does your city collect open data? How would you use this data to improve public services?

[1] https://opendatahandbook.org/guide/en/what-is-open-data/

[2] https://www.data.gov/blog/open-data-history

[3] https://www.slideshare.net/welkaim/big-data-architecture-hadoop-and-data-lake-part-1

[4] https://whatis.techtarget.com/definition/complex-event-processing-CEP

Posted by Ugo Ciracì

Business Unit Leader of Utilities. Ugo leads high-performing engineering teams and has a track record of successful digital transformation, DevOps and Big Data projects. He believes in servant and gentle leadership and cultivates architecture, organization, methodologies and agile practices.

If you found this article useful, take a look at our Knowledge Base and follow us on our Medium Publication, Agile Lab Engineering!