Elite Data Engineering Manifesto

Learn about the principles of Elite Data Engineering that enhance modern data management practices.

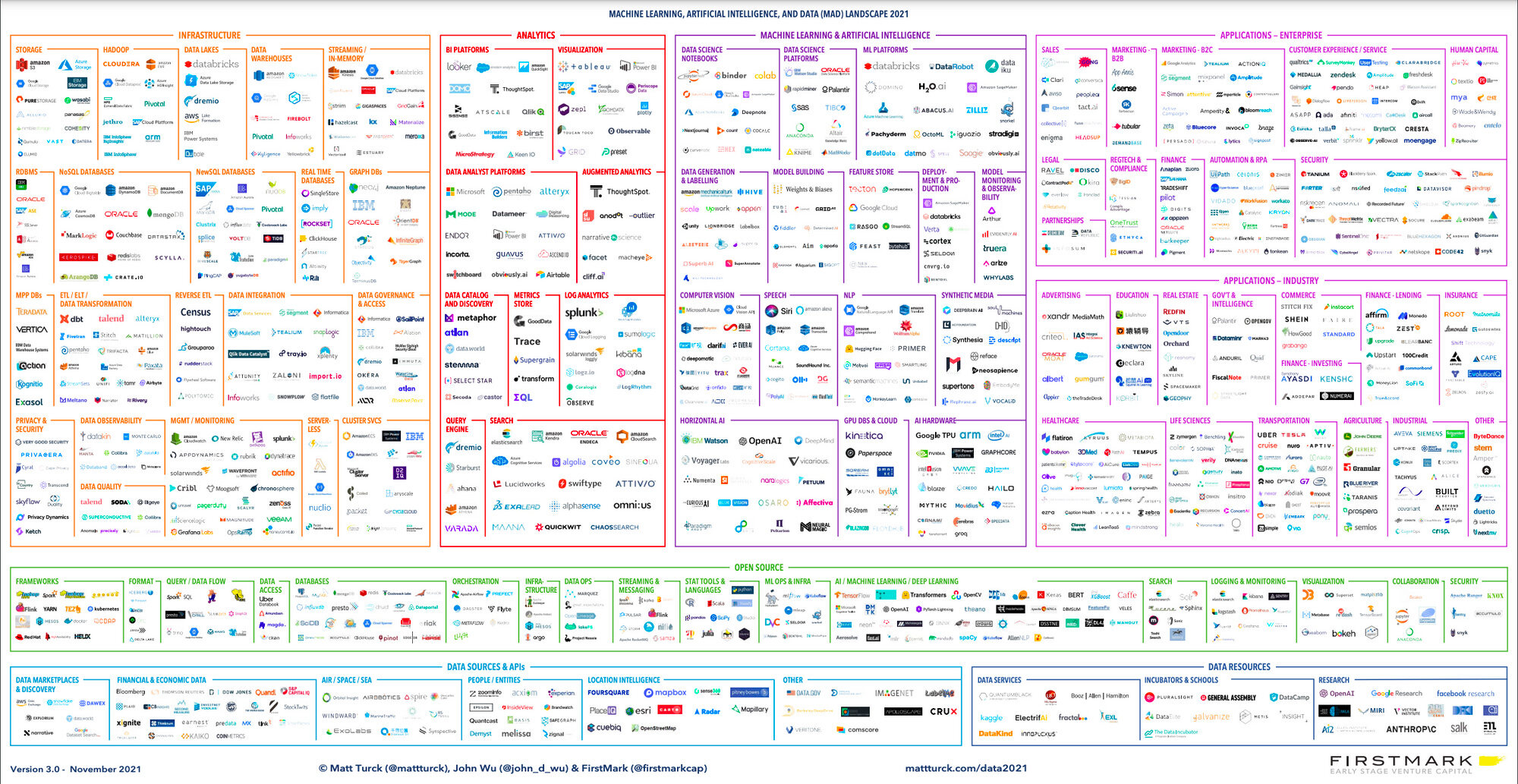

Data engineering is a dynamic and ever-evolving field, constantly shaped by new tools, cloud providers, and technological advancements. Reflecting on how things were done just five years ago compared to today, it becomes evident that the landscape has undergone a significant transformation.

The rapid introduction of new tools, cloud platforms, code-less solutions, ETL frameworks, reverse-ETL frameworks, and more can be overwhelming, leaving data engineers juggle with a complex and ever-expanding array of options.

While it may appear that the data engineering role has become primarily focused on tool selection and integration, aiming to construct data pipelines that satisfy customers or business units, this approach may only yield temporary success.

As organizations accumulate more data sources, complex transformations, and intricate processes, relying solely on tools without a structured methodology for handling data can lead to the formation of data swamps instead of efficient data lakes. Consequently, the data engineering team may face mounting challenges, leading to difficult and frustrating days at work. A survey conducted on the state of data engineering further supports this.

The underlying problem with this approach lies in treating data engineering as a mere collection of tools, each with its own ecosystem and specific requirements. The success or failure of data projects revolve not on the tools themselves, but rather on how we employ these tools in conjunction with best practices. Therefore, the path to success lies in adopting a risk-free approach that prioritizes practices over tools. But how can we ensure success without unnecessary risks?

In my opinion, the key to achieving success in data engineering is to return to the fundamentals, particularly by embracing the principles of software engineering.

In the world of software engineering, hyper-focusing on mastering one library isn’t as important as it might seem. In the rapidly changing world of IT, libraries and tools come and go like passing trends. Putting all the effort into just one library is a risky bet. Why? Because newer and better tools often swoop in, swiftly replacing the old ones.

For software engineers, the best bet is to build a strong foundation of knowledge and skills. When you’ve got the basics down, you can then smoothly adapt to new tools and learning their ins and outs in no time. And here’s the catch: if you’re all about using good programming practices, you’re basically giving your creations a stamp of quality.

In the end, the key isn’t all about being super focused on a single library. It’s about having a toolkit of essential skills that can handle whatever the software world put in your way. So, whether it’s Library A today or Tool B tomorrow, your software skills remain sharp, and producing high-quality software becomes the standard.

I find out the perfect analogy while playing tennis.

In tennis, the ultimate objective is to strike the ball in such a way that the opponent cannot return it. Regardless of the racket type or the string used (tempting as it may be to switch rackets every few months, hoping that the new racquet will make you a totally better player), the outcome remains largely unchanged if we have not dedicated ample time to practice and develop a solid foundation of fundamental techniques for striking the ball effectively.

In the end, some gains can be achieved while using a certain tool (racket or strings), but are negligible in the long run without practice.

Similarly, in data engineering, we must prioritize continuous learning and the application of good practices in our day-to-day work.

This approach helps us steer clear of undesirable situations.

These practices are derived from the core principles of software engineering, including:

In the world of data engineering, we can add this best practice that deserves a spotlight: meticulous management and cataloguing of an asset’s metadata. The purpose is to provide a comprehensive understanding of the data, its structure, origin, usage, and relationships to provide a context and structure to data, which in turn improves data quality, accelerates development, supports compliance, and enhances collaboration across teams.

It is a practice that tends to be overshadowed but plays a pivotal role in successful data projects.

What distinguishes these practices is their tool-agnostic nature. Those are not bound to specific frameworks, libraries, or tools and instead revolve around fundamental principles that guide the entire end-to-end development.

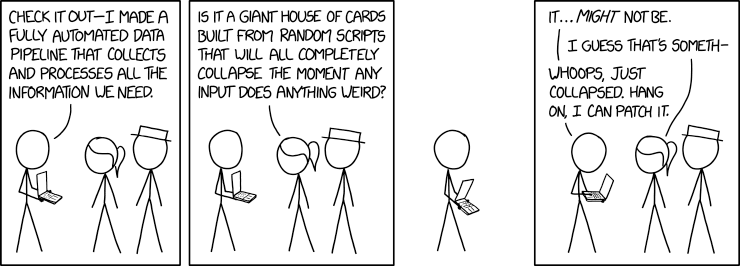

It’s tempting to jump headfirst into a project using a preferred tool and then retrofit these best practices. However, this approach often leads to unforeseen roadblocks.

Each tool comes with its own quirks and intricacies, making it challenging to seamlessly integrate these practices.

The heart of the issue centres around picking the right practice. Rather than bend over backwards a practice to fit a project, it’s more effective to let the chosen practice steer the project’s development. By allowing the practice to take precedence, we align our efforts with a proven path to successful project completion.

Although tools and technologies are undoubtedly important for a data project, following core data engineering practices is what truly sets the stage for a smooth and successful project. The secret is to allow these practices to lead our efforts, making sure that the project’s path is shaped by a clear practice, not the other way around.

For instance, now most no-code or low-code tools support versioning, during a tool assessment, the consideration that tools support a core practice that I choose can help the decision-making process.

To initiate the adoption of these practices, organizations should establish a dedicated cross-functional team, often referred to as the community of practice team. This team is responsible for identifying, discussing, and disseminating the best practices across all project teams. By involving members from different projects, organizations can foster knowledge sharing, collaboration, and the continuous improvement of data engineering practices (for starters, you can refer to this Elite Data Engineering article).

Furthermore, it is crucial to document the identified best practices in a comprehensive and easily accessible manner. This documentation should be readily available to all team members, enabling them to refer to the established practices at any time.

To ensure consistency and adherence to best practices, organizations can define a set of policies that outline the expected standards and procedures for data engineering projects. These policies serve as guiding principles for the teams, helping to maintain reproducibility, consistency, and high-quality outputs. For example, we can require that all data projects needs to publish metadata on a data catalogue.

To further streamline the implementation of these practices, organizations can leverage automation tools and technologies. By automating processes, teams can reduce the likelihood of human error and ensure the consistent application of best practices.

It is important to recognize that while tools may change over time, the logical definitions defined following the best practices at the project(s) kickoff, will not change. The mapping of the defined policies to new tools becomes a straightforward process, enabling seamless transitions while preserving the integrity and effectiveness of the established practices.

In believe the success of data engineering projects relies on embracing best practices derived from software engineering fundamentals. While tools play a crucial role, it is the application of these practices that ultimately ensures the delivery of high-quality data engineering projects.

By placing practices at the forefront and emphasizing their importance, organizations can adapt to evolving technologies and requirements, minimize risks, and achieve long-term success.

Learn about the principles of Elite Data Engineering that enhance modern data management practices.

Unlock productivity & reduce cognitive load for data engineers with platform engineering. Streamline processes, enforce best practices, & automate...

Learn how to manage cloud costs effectively within a Data Mesh framework using FinOps principles and best practices.